AI search histories reshape crime investigations

Posted February 27, 2026 08:44

Updated February 27, 2026 08:44

“Would police investigate a stock investment chatroom scam if I deposit stolen money into a victim’s account?”

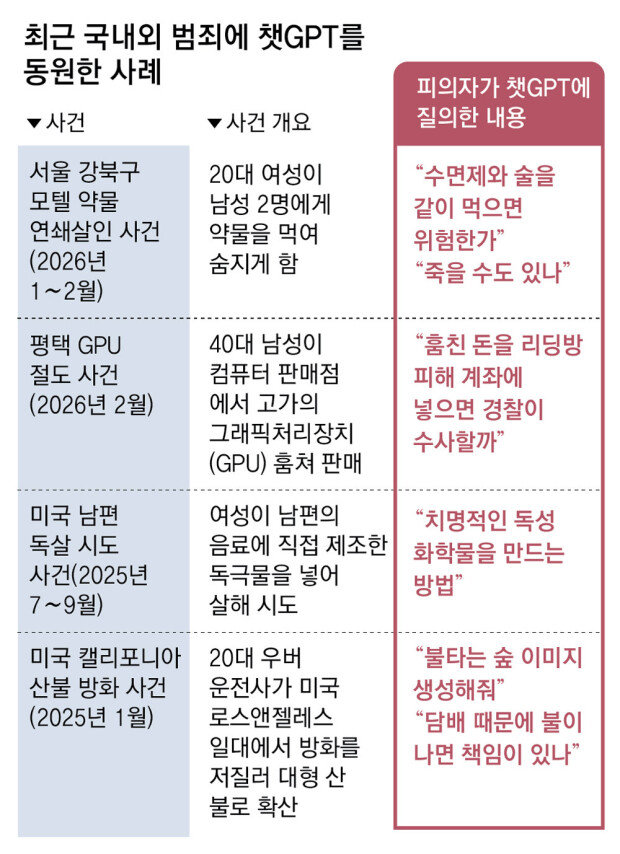

At about 6 a.m. on Feb. 22, a man in his 40s broke into a computer parts store in Pyeongtaek, Gyeonggi Province, and stole high-end graphics processing units worth tens of millions of won. Before carrying out the theft, he put that question to ChatGPT.

The chatbot replied that if he were arrested on theft charges, police would also look into the chatroom fraud case. After being apprehended, the man told investigators he acted after reading the AI’s response, hoping authorities would move swiftly to investigate the investment scam in which he had suffered losses. He said he believed that even if the theft came to light, police might ultimately resolve the fraud that had cost him money.

Investigators say exchanges between suspects and artificial intelligence services are increasingly becoming pivotal evidence in criminal cases. AI search histories show more than the keywords a suspect entered. They can illuminate a person’s thinking, intent and state of mind at the time. Because such queries are text-based, even partially deleted records can often be largely recovered through digital forensics, making them especially valuable in investigations, experts say.

● AI as accomplice, revealing motive

In a recent case involving drug-related serial killings at motels in Seoul’s Gangbuk District, police upgraded the charge against a suspect surnamed Kim from injury resulting in death to murder after examining her ChatGPT query history.

A forensic review of Kim’s mobile phone found that she had repeatedly asked the chatbot about the dangers of drugs, including how much would be hazardous and whether certain amounts could prove fatal. Investigators determined that the pattern of questions bolstered evidence of intent.

Comparable cases have emerged overseas. In October last year in North Carolina, a woman was arrested on suspicion of attempting to poison her husband after seeking information from ChatGPT about toxic substances. That same month in Los Angeles, a suspect accused of igniting a major wildfire was found to have asked the chatbot to generate images of a burning forest before the blaze. Shortly after the fire, he also inquired whether he would be responsible if a blaze started while he was smoking. In each instance, chat records with an AI service served as circumstantial evidence pointing to motive.

Investigators say AI conversation histories offer a different depth of insight than conventional portal search logs. Rather than isolated keyword entries, AI exchanges unfold as interactive dialogues that progressively refine information. That back-and-forth leaves a detailed trail, allowing authorities to reconstruct a user’s interests and reasoning more precisely.

Chung Doo-won, a forensic science professor at Sungkyunkwan University, said ChatGPT conversations tend to reveal the user’s objective more directly, making them particularly significant in criminal investigations.

● Investigators step up forensic recovery efforts

In practice, investigators are already analyzing AI search histories, including ChatGPT records, in a wide range of cases.

Lee Min-hyung, a specialist at Yulchon LLC, cited a case last fall in which conversations with ChatGPT recovered from a deceased person’s phone contained indications of depression. Those exchanges helped authorities piece together the circumstances surrounding the death. When a person’s intent cannot be directly verified, AI chat records can serve as meaningful circumstantial evidence, he said. Lee added that reviewing AI search histories has become a routine part of many investigations.

Another distinguishing feature is the relatively high probability that AI search records can be recovered through forensic techniques. Unlike video or image files, which are often difficult to restore once damaged, text-based data are comparatively easy to retrieve, even after deletion.

Lee Jun-hyung, head of Playbit, said recovery rates vary depending on the situation but are generally high for text data. Even exchanges conducted with an AI service several years ago are likely to be recoverable, he said.

Legal scholars say ChatGPT query records are not fundamentally different from conventional search histories when it comes to admissibility in court.

Kwon Yang-seop, a law professor at Kunsan National University, said that just as Google search terms have been accepted as evidence, prompts entered into ChatGPT could be treated within a similar legal framework. The key question, he said, is whether the individual in question actually entered the prompts. When combined with other evidence, such as phone usage logs, the likelihood that such records will be admitted increases.

Experts caution that as investigative authorities advance AI forensic capabilities, criminals may turn to so-called anti-forensic tactics to evade detection, intensifying the race between tracking and concealment.

Suspects may increasingly rely on private browsing modes, promptly delete records or even format devices to avoid leaving traces when using ChatGPT for illicit purposes. Chung said that as the effectiveness of AI forensics becomes more widely recognized, more offenders are likely to look for ways to circumvent scrutiny. He added that law enforcement agencies must continue upgrading their forensic expertise to keep pace.

Reporter Cho Seung-yeon (조승연) cho@donga.com
