Science journals ban listing of ChatGPT as research paper author

Posted February 22, 2023 07:47

Updated February 22, 2023 07:47

“Ultimately the product must come from the wonderful computer in our heads,” said Holden Thorp, editor-in-chief of Science.

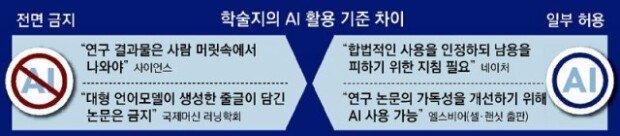

In an editorial published on Tuesday, Thorp wrote that “machines play an important role,” but that they are ultimately tools for “the human endeavor of struggling with important questions.” Science has prohibited the use of text generated by advanced AI-driven chatbots such as ChatGPT in papers, as well as the listing of such AI programs as co-authors. Thorp argued that using ChatGPT to write papers submitted to academic journals is a shortcut comparable to altering images with programs such as Photoshop. “At a time when trust in science is eroding, it’s important for scientists to recommit to careful and meticulous attention to details,” Thorp wrote. The International Conference on Machine Learning (ICML) also announced that it would ban academic papers written using large language models (LLMs).

However, other academic journals, such as Nature, take a slightly different position from Science and ICML. Magdalena Skipper, editor-in-chief of Nature, wrote in an editorial published on Jan. 24 that academic journals must allow the lawful use of AI-driven chatbots while providing clear standards to prevent their misuse. This view rests on the idea that, because the use of ChatGPT has become an unstoppable trend, the focus should be on setting guidelines for its appropriate use rather than on banning it outright. Other journals and publishers, including Cell Press, The Lancet, and eLife, have expressed similar views.

warum@donga.com
