KAIST research team launches deepfake-detecting mobile app

Posted March 31, 2021 07:24

Updated March 31, 2021 07:24

A mobile application that can detect deepfakes, computer-manipulated images that are used to produce fake news and pornography, has been developed for the first time in Korea.

A research team at the Korea Advanced Institute of Science and Technology (KAIST) led by Professor Lee Heung-kyu and KAIST start-up Digital Innotech said on Tuesday that they had launched a mobile app version of KaiCatch, software that detects deepfake images and videos using a neural network.

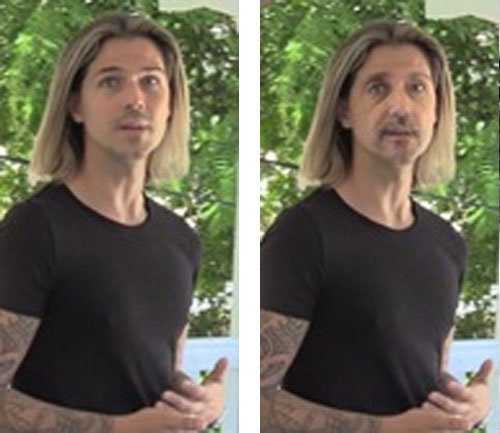

Deepfake is a technology that replaces or digitally manipulates a real person’s face or certain parts of the body using artificial intelligence (AI). With deepfake technology, a person’s face can be swapped with someone else’s, the faces of celebrities can be mapped onto someone else, or parts of a face can be changed. There have been many cases where the technology was used to produce fake news or pornography, infringing on the human rights of victims.

If you upload videos to the app in AVI or MP4 format, the app splits them into individual frames, converts the frames into images, and determines whether they are fake. The app detects traces of digital tampering and looks for geometric distortions in the nose, mouth, and facial contours. You can also capture a frame from a video, convert it into an image, and upload it to the app to determine whether it has been forged or tampered with. If you upload digital images in formats such as BMP, TIF, TIFF, PNG, or JPEG, the app provides an analysis of the image in which areas suspected of forgery or tampering are marked in different colors.
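The upload flow described above can be sketched roughly as follows. This is a minimal illustration and not KaiCatch's actual code: the function names `classify_upload` and `video_verdict`, the 0.5 threshold, and the rule of averaging per-frame scores are all assumptions made for the example, and the per-frame scores stand in for the output of a detector the article does not describe.

```python
from pathlib import Path
from statistics import mean
from typing import Sequence

# Formats named in the article; ".jpg" and ".tif" are included as the
# common short spellings of JPEG and TIFF.
VIDEO_FORMATS = {".avi", ".mp4"}
IMAGE_FORMATS = {".bmp", ".tif", ".tiff", ".png", ".jpg", ".jpeg"}


def classify_upload(filename: str) -> str:
    """Return 'video', 'image', or 'unsupported' from the file extension."""
    suffix = Path(filename).suffix.lower()
    if suffix in VIDEO_FORMATS:
        return "video"
    if suffix in IMAGE_FORMATS:
        return "image"
    return "unsupported"


def video_verdict(frame_scores: Sequence[float], threshold: float = 0.5) -> dict:
    """Combine per-frame fake scores (0.0 to 1.0) into one verdict.

    Hypothetical rule: a video is flagged when the mean frame score
    reaches the threshold. The real app's decision rule is not public.
    """
    if not frame_scores:
        raise ValueError("a video must yield at least one frame")
    average = mean(frame_scores)
    # Indices of the individual frames that look suspicious on their own.
    flagged = [i for i, score in enumerate(frame_scores) if score >= threshold]
    return {
        "mean_score": average,
        "flagged_frames": flagged,
        "is_fake": average >= threshold,
    }
```

In this sketch a video is first routed by extension, each extracted frame is scored separately, and the scores are then combined, which mirrors the frame-by-frame approach the article describes.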

Professor Lee said the app can detect deepfakes with a reliability of around 90 percent even if unpredictable or unknown deepfake techniques are used.

The app can be downloaded for free, but a fee is charged when users request an analysis. Users receive an analysis report within three days of uploading a video or image. The app is currently available only for Android devices, but will soon be available for iOS devices as well.

shinjsh@donga.com